Kafka's Duplicate Message Problem

Your consumers are humming along at 10,000 messages per second. One crashes. Suddenly you've got duplicate messages, stalled partitions, and angry Slack pings from downstream.

That's consumer group rebalancing, and I think it's the most common Kafka production issue that nobody warns you about until you're already dealing with it.

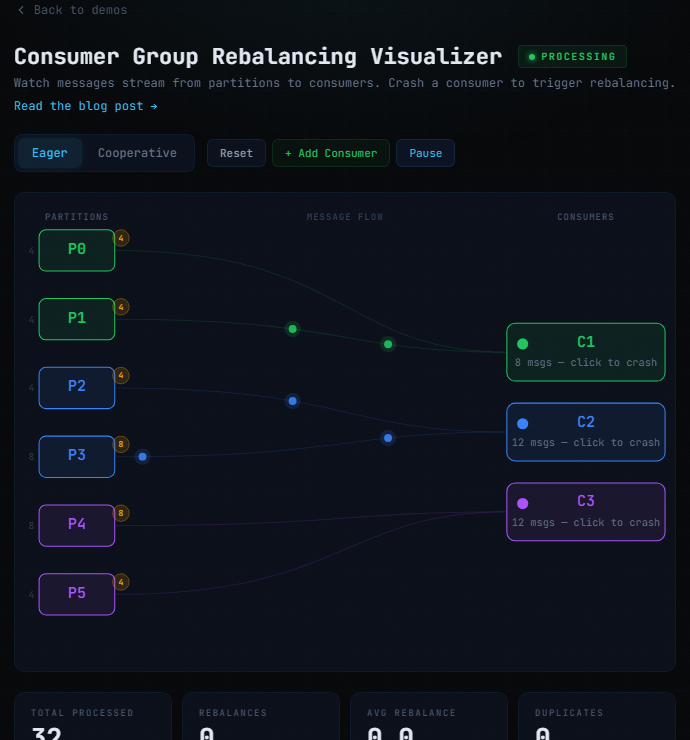

Try the interactive demo - crash consumers, compare eager vs cooperative rebalancing, and watch duplicates happen in real time.

What Is Rebalancing?

Consumers in the same consumer group split up the partitions of a topic between them. When something changes - a consumer crashes, one joins, partitions change - Kafka needs to redistribute. The problem is how: by default, every consumer stops while partitions get shuffled around. Full stop-the-world. Pretty aggressive default if you ask me.

What Triggers a Rebalance?

1. A Consumer Crashes or Gets Evicted

If a consumer doesn't send heartbeats within session.timeout.ms (default: 45s), the coordinator assumes it's dead. Here's the annoying version: a slow DB query blocks poll(), heartbeats stop, and Kafka kicks the consumer out - even though it was actively doing work. Nothing actually broke, it was just slow.

// This consumer will get evicted if processRecord() takes > 45 seconds

while (true) {

ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));

for (ConsumerRecord<String, String> record : records) {

processRecord(record); // Blocks for 60 seconds on a slow DB query

}

}

2. A Consumer Joins the Group

Every join triggers a rebalance. Rolling deployment of 10 consumers? That's 10 rebalances back to back. If you're on eager assignment, that's 10 stop-the-world pauses.

3. Partition Count Changes

Adding partitions triggers a full rebalance across the whole group.

Eager vs Cooperative Rebalancing

Eager Rebalancing (The Default)

With RangeAssignor or RoundRobinAssignor, all consumers give up all partitions and stop. One consumer out of twenty crashes? All twenty stop. That's what bothers me about this - it turns a single-consumer problem into a cluster-wide event.

// Eager rebalancing - stop the world

props.put(ConsumerConfig.PARTITION_ASSIGNMENT_STRATEGY_CONFIG,

RangeAssignor.class.getName());

Cooperative Rebalancing

With CooperativeStickyAssignor, only the affected partitions move. Everyone else keeps going. This is almost certainly what you want.

// Cooperative rebalancing - only affected partitions move

props.put(ConsumerConfig.PARTITION_ASSIGNMENT_STRATEGY_CONFIG,

CooperativeStickyAssignor.class.getName());

Trade-offs of Cooperative Rebalancing

It's not free, but the trade-offs are worth it in almost every case:

- Multiple rounds. Takes at least two rounds instead of eager's one. Total time can actually be longer.

- Brief partition gaps. Revoked partitions sit unassigned between rounds.

- All-or-nothing migration. You can't mix eager and cooperative in the same group.

- Quieter failures. A stuck rebalance on a subset of partitions can go unnoticed longer.

The Duplicate Processing Trap

Here's where it gets really ugly. When all consumers give up all partitions at once, every single one has a window of uncommitted work that gets replayed. Twenty consumers each holding uncommitted messages, one crashes, and now you've got 20 partitions worth of duplicates. Not just the dead consumer's - everyone's.

With auto-commit (also the default), offsets get committed every 5 seconds. Anything processed between the last commit and the rebalance? Re-processed by the new owner. Eager rebalancing makes this as bad as possible because it forces every partition to change hands. Cooperative limits the damage to just the affected ones.

So you've got eager rebalancing plus auto-commit. Both are defaults. Both should be changed for anything you care about. Honestly, I think this combination is one of the bigger footguns in Kafka's default config.

The Fix: Manual Offset Management

while (true) {

ConsumerRecords<String, String> records = consumer.poll(Duration.ofMillis(100));

for (ConsumerRecord<String, String> record : records) {

processRecord(record);

consumer.commitSync(Collections.singletonMap(

new TopicPartition(record.topic(), record.partition()),

new OffsetAndMetadata(record.offset() + 1)

));

}

}

If you need more throughput, batch your commits:

int count = 0;

Map<TopicPartition, OffsetAndMetadata> offsets = new HashMap<>();

for (ConsumerRecord<String, String> record : records) {

processRecord(record);

offsets.put(

new TopicPartition(record.topic(), record.partition()),

new OffsetAndMetadata(record.offset() + 1)

);

if (++count % 100 == 0) {

consumer.commitAsync(offsets, null);

offsets.clear();

}

}

consumer.commitSync(offsets);

Configuration Trade-offs

| Configuration | Throughput | Duplicate Risk | Best For |

|---|---|---|---|

| Auto-commit + eager | High | High | Idempotent consumers, analytics |

| Manual sync per message | Low | Low | Financial transactions, payments |

| Manual async + cooperative | High | Medium | Event logging with deduplication |

| Batched commits + sticky | Very High | Medium | High-volume analytics pipelines |

Monitoring

Three metrics that actually tell you something:

rebalance-latency-avg- If this is going up, your consumers are spending more time rebalancing than processing. Not great.assigned-partitions- If it's bouncing between 0 and N, you've got a consumer that keeps crashing and rejoining.commit-latency-avg- Slow commits can trigger evictions, which cause more rebalances. It's a fun feedback loop.

The Checklist

- Switch to

CooperativeStickyAssignor. Just do this. Should be the default for any new consumer group. - Turn off auto-commit. Set

enable.auto.commit=falseand handle offsets yourself. - Tune your timeouts. Find the balance between catching real failures and tolerating slow processing.

- Make consumers idempotent. Deduplication keys, upserts instead of inserts.

- Watch your rebalance frequency. More than a few per hour and something's wrong.

Key Takeaways

- Rebalancing will happen. Design for it instead of hoping it won't.

- Switch to cooperative rebalancing. There's almost no reason to keep eager. It turns one failure into a cluster-wide pause.

- Manage offsets yourself when you care about correctness more than raw throughput.

- Make your consumers idempotent. Even the best offset strategy has edge cases. If duplicates are safe, rebalancing goes from incident to inconvenience.

Dig Deeper

- KIP-429: Kafka Consumer Incremental Rebalance Protocol - the proposal that introduced cooperative rebalancing

- Confluent: Cooperative Rebalancing in Practice - Confluent's deep dive on incremental cooperative rebalancing

- Apache Kafka Consumer Javadoc - official consumer API docs covering offset management and assignment strategies

- Kafka: The Definitive Guide, Chapter 4 - thorough coverage of consumer groups and partition assignment

- KIP-345: Introduce static membership - reduces rebalances during rolling deploys with

group.instance.id